
Industry after industry is being transformed by software. It started with industries such as music, film and finance, whose assets lent themselves to being easily digitized. Fast forward to today, and we see a push to transform industries that have more physical hardware and require more human interaction, for example healthcare, agriculture and freight. It’s harder to digitize these industries, but it’s arguably more important. At Einride, we’re doing just that.

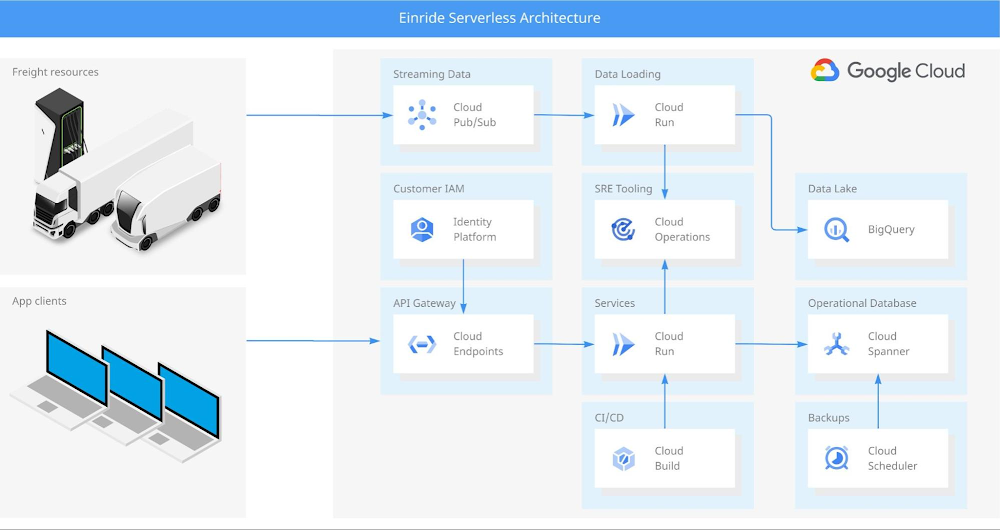

Our mission is to make Earth a better place through intelligent movement, building a global autonomous and electric freight network that has zero dependence on fossil fuel. A big part of this is Einride Saga, the software platform that we’ve built on Google Cloud. But transforming the freight industry is a formidable technical task that goes far beyond software. Still, observing the software transformations of other industries has shown us a powerful way forward.

So, what lessons have we learned from observing the industries that led the charge?

Lessons from re-architecting software systems

Most of today’s successful software platforms started in co-located data centers, eventually moving into the public cloud, where engineers could focus more on product and less on compute infrastructure. Shifting to the cloud was done using a lift-and-shift approach: one-to-one replacements of machines in data centers with VMs in the cloud. This way, the systems didn’t require re-architecting, but the approach was also incredibly inefficient and wasteful. Applications running on dedicated VMs often had, at best, 20% utilization. The other 80% was wasted energy and resources. Since then, we’ve learned that there are better ways to do it.

Just as the advent of shipping containers opened up the entire planet for trade by simplifying and standardizing shipping cargo, containers have simplified and standardized shipping software. With containers, we can leave management of VMs to container orchestration systems like Kubernetes, an incredibly powerful tool that can manage any containerized application. But that power comes at the cost of complexity, often requiring dedicated infrastructure teams to manage clusters and reduce cognitive load for developers. That is a barrier to entry for new tech companies starting up in new industries, and that is where serverless comes in. Serverless offerings like Cloud Run abstract away cluster management and make building scalable systems simple for startups and established tech companies alike.

Serverless isn’t a fit for all applications, of course. While almost any application can be containerized, not all applications can make use of serverless. It’s an architecture paradigm that must be considered from the start. Chances are, an application designed with a VM-focused mindset won’t be fully stateless, and this prevents it from successfully running on a serverless platform. Adopting a serverless paradigm for an existing system can be challenging and will often require redesign.

Even so, the lessons from industries that digitized early are many: by abstracting away resource management, we can achieve higher utilization and more efficient systems. When resource management is centralized, we can apply algorithms like bin packing, and we can ensure that our workloads are efficiently allocated and dynamically re-allocated to keep our systems running optimally. With centralization comes added complexity, and the serverless paradigm enables us to shift complexity away from developers, as well as from entire companies.
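To make the bin packing idea concrete, here is a minimal sketch in Go of the first-fit decreasing heuristic, one common approximation for bin packing. It is only an illustration, not how Cloud Run or Einride Saga actually schedule work. The function name `packFirstFitDecreasing` and the sample loads (workloads expressed as a percentage of one machine) are assumptions made for this example.

```go
package main

import (
	"fmt"
	"sort"
)

// packFirstFitDecreasing sorts loads from largest to smallest and places each
// one in the first bin that still has room, opening a new bin when none does.
// It returns the contents of every bin. A load that is not positive or that
// exceeds the capacity is rejected with an error.
func packFirstFitDecreasing(loads []int, capacity int) ([][]int, error) {
	sorted := append([]int(nil), loads...) // copy so the caller's slice is untouched
	sort.Sort(sort.Reverse(sort.IntSlice(sorted)))

	var bins [][]int
	var free []int // remaining capacity of each bin
	for _, load := range sorted {
		if load <= 0 || load > capacity {
			return nil, fmt.Errorf("load %d does not fit capacity %d", load, capacity)
		}
		placed := false
		for i := range bins {
			if free[i] >= load {
				bins[i] = append(bins[i], load)
				free[i] -= load
				placed = true
				break
			}
		}
		if !placed {
			bins = append(bins, []int{load})
			free = append(free, capacity-load)
		}
	}
	return bins, nil
}

func main() {
	// Hypothetical workloads, each given as percent of one machine.
	loads := []int{20, 50, 30, 20, 40, 20, 20}
	bins, err := packFirstFitDecreasing(loads, 100)
	if err != nil {
		panic(err)
	}
	fmt.Printf("%d dedicated machines -> %d shared machines: %v\n",
		len(loads), len(bins), bins)
}
```

First-fit decreasing is a heuristic, so it does not always find the smallest possible number of bins. The point is that a central allocator that sees every workload can consolidate them, which a one-VM-per-application setup cannot.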

Opportunities in re-architecting freight systems

At Einride, we have taken the lessons from software architecture and applied them to how we architect our freight systems. For example, the now familiar “lift-and-shift” approach is frequently applied in the industry for the deployment of electric trucks – but attempts at one-to-one replacements of diesel trucks lead to massive underutilization.

With our software platform, Einride Saga, we address underutilization by applying serverless patterns to freight, abstracting away complexity from end-customers and centralizing management of resources using algorithms. With this approach, we have been able to achieve near-optimal utilization of the electric trucks, chargers and trailers that we manage.

But to get these benefits, transport networks need to be re-architected. Flows in the network need to be reworked to support electric hardware and more dynamic planning, meaning that shippers will need to focus more on specifying demand and constraints, and less on planning out each shipment by themselves.

We have also found patterns in the freight industry that influence how we build our software. Managing electric trucks has made us aware of the differences in availability of clean energy across the globe, because – much like electric trucks – Einride Saga relies on clean energy to operate in a sustainable way. With Google Cloud, we can run the platform on renewable energy, worldwide.

The core concepts of serverless architecture — raising the abstraction level, and centralizing resource management — have the potential to revolutionize the freight industry. Einride’s success has sprung from an ability to realize ideas and then quickly bring them to market. Speed is everything, and the Saga platform – created without legacy in Google Cloud – has enabled us to design from the ground up and leverage the benefits of serverless.

Advantages of a serverless architecture

Einride’s architecture supports a company that combines multiple groundbreaking technologies — digital, electric and autonomous — into a transformational end-to-end freight service. The company culture is built on transparency and inclusivity, with digital communication and collaboration enabled by the Google Workspace suite. The technology culture promotes shared mastery of a few strategically selected technologies, enabling developers to move seamlessly up and down the tech stack — from autonomous vehicle to cloud platform.

If a modern autonomous vehicle is a data center on wheels, then Go and gRPC are fuels that make our vehicle services and cloud services run. We initially started building our cloud services in GKE, but when Google Cloud announced gRPC support for Cloud Run (in September 2019), we immediately saw the potential to simplify our deployment setup, spend less time on cluster management, and increase the scalability of our services. At the time, we were still very much in startup mode, making Cloud Run’s lower operating costs a welcome bonus. When we migrated from GKE to Cloud Run and shut down our Kubernetes clusters, we even got a phone call from our reseller who noticed that our total spend had dropped dramatically. That’s when we knew we had stumbled on game-changing technology!

In Identity Platform, we found the building blocks we needed for our Customer Identity and Access Management system. The seamless integration with Cloud Endpoints and ESPv2 enabled us to deploy serverless API gateways that took care of end-user authentication and provided transcoding from HTTP to gRPC. This enabled us to get the performance and security benefits of using gRPC in our backends, while keeping things simple with a standard HTTP stack in our frontends.

For CI/CD, we adopted Cloud Build, which gave all our developers access to powerful build infrastructure without having to maintain our own build servers. With Go as our language for backend services, ko was an obvious choice for packaging our services into containers. We have found this to be an excellent tool for achieving both high security and performance, providing fast builds of distroless containers with an SBOM generated by default.

One of our challenges to date has been to provide seamless and fully integrated operations tooling for our SREs. At Einride, we apply the SRE-without-SRE approach: engineers who develop a service also operate it. When you wake up in the middle of the night to handle an alert, you need the best possible tooling available to diagnose the problem. That’s why we decided to leverage the full Cloud Operations suite, giving our SREs access to logging, monitoring, tracing, and even application profiling. The challenge has been to build this into each and every backend service in a consistent way. For that, we developed the Cloud Runner SDK for Go – a library that automatically configures the integrations and even fills in some of the gaps in the default Cloud Run monitoring, ensuring we have all four golden signals available for gRPC services.

For storage, we found that the Go library ecosystem around Cloud Spanner provided us with the best end-to-end development experience. We chose Spanner for its ease of use and low management overhead – including managed backups, which we were able to automate with relative ease using Cloud Scheduler. Building our applications on top of Spanner has provided high availability for our applications, as well as high trust for our customers and investors.

Using protocol buffers to create schemas for our data has allowed us to build a data lake on top of BigQuery, since our raw data is strongly typed. We even developed an open-source library to simplify storing and loading protocol buffers in BigQuery. To populate our data lake, we stream data from our applications and trucks via Pub/Sub. In most cases, we have been able to keep our ELT pipelines simple by loading data through stateless event handlers on Cloud Run.

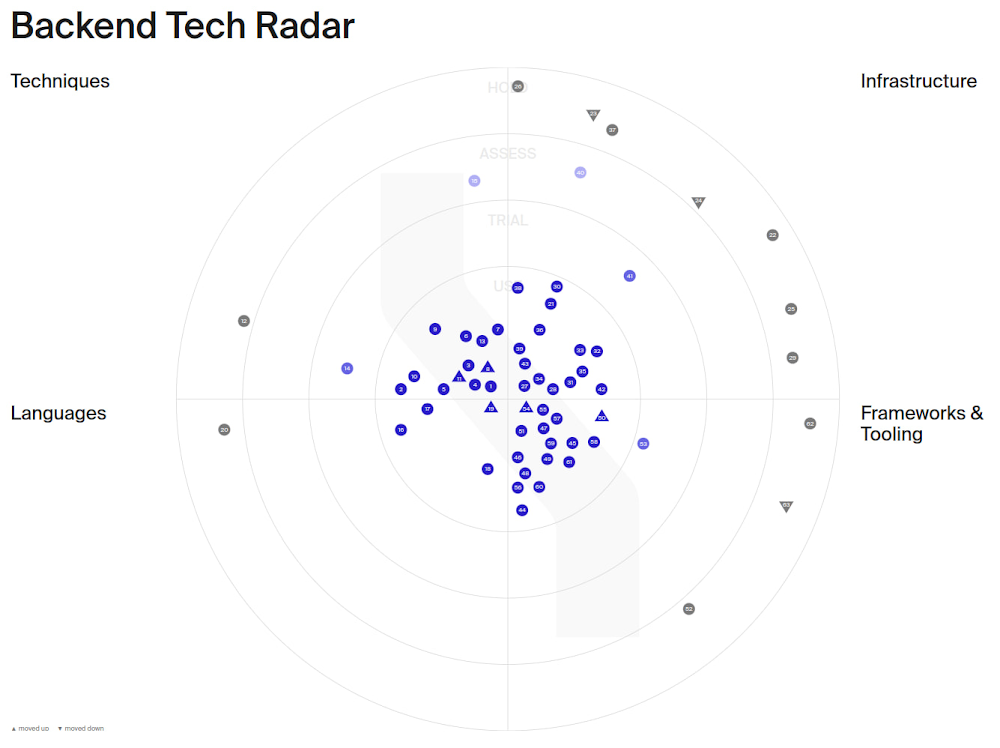

The list of serverless technologies we’ve leveraged at Einride goes on, and keeping track of them is a challenge of its own – especially for new developers joining the team who don’t have the historical context of technologies we’ve already assessed. We built our tech radar tool to curate and document how we develop our backend services, and perform regular reviews to ensure we stay on top of new technologies and updated features.

But the journey is far from over. We are constantly evolving our tech stack and experimenting with new technologies on our tech radar. Our future goals include increasing our software supply chain security and building a fully serverless data mesh. We are currently investigating how to leverage ko and Cloud Build to achieve SLSA level 2 assurance in our build pipelines and how to incorporate Dataplex in our serverless data mesh.

A freight industry reimagined with serverless

For Einride, being at the cutting edge of adopting new serverless technologies has paid off. It’s what has enabled us to grow from a startup to a company scaling globally without any investment in building our own infrastructure teams.

Industry after industry is being transformed by software, including complex industries that have more physical hardware and require more human interaction. To succeed, we must learn from the industries that came before us, recognize the patterns, and apply the most successful solutions.

In our case, it has been possible not just by building our own platform with a serverless architecture, but also by taking the core ideas of serverless and applying them to the freight industry as a whole.